👋 Hi, I'm

"Create and build whenever I can"

Learning to act in pixels

We perceive in order to act, and we act in order to perceive. — J.J. Gibson

My work starts from a simple premise: many agents meet the world through pixels. I study how those visual streams can support action, not just recognition, so a model can decide what to do next, recover from mistakes, and stay grounded in what it actually sees.

That perspective connects my projects from AR assistance to today's multimodal foundation models: building systems that turn pixels into physical actions, interactive guidance, or trustworthy reasoning for humans in the loop.

Services & Tools

A personal toolkit of research and playful services designed to support academic work with a touch of fun.

掐指能算半边天 ("Reading Half the Sky on My Fingertips")

Traditional Chinese fortune-telling algorithms with fun, relaxed predictions.

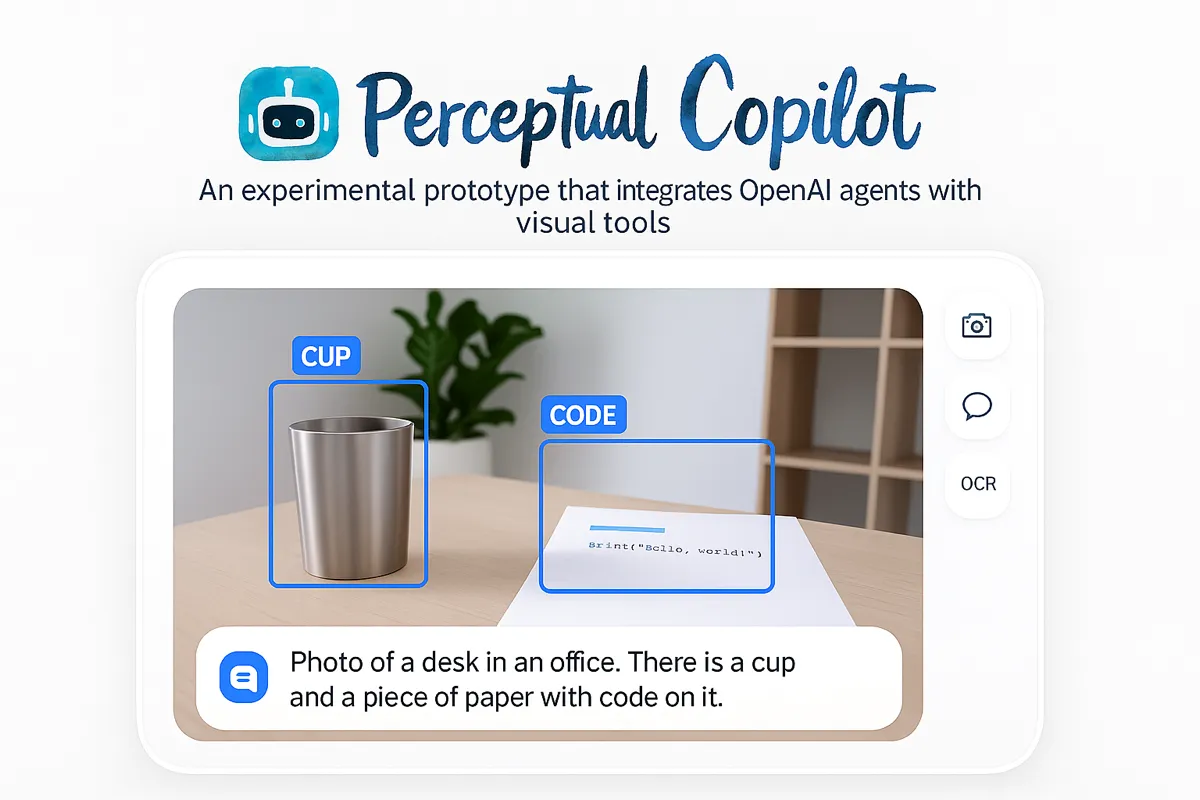

Perceptual Copilot

An experimental prototype that integrates OpenAI agents with visual tools to process real-time video streams.

Re:Read

More insights with less effort

Uptime

Continuous monitoring of jing.vision service uptime.

Infra

A rebranded infrastructure landing page for multimodal systems, deployment, and integration.

Re:Search

Re:Search on the optimized path

Article

A research API for structured paper extraction, indexing, and automation workflows built around predictable schemas.

Research & Projects

A collection of research projects and demos, each with an artistic cover image, focused on real-world system design and applications.

Does Reasoning Improve Seeing? Understanding When Vision-Language Models Benefit from Thinking

Jing Bi, Luchuan Song, Dingxing Zhang, Pinxin Liu, Guangyu Sun, Lianggong Bruce Wen, Weidong Cai, Chen Chen, Chenliang Xu

An ICML 2026 paper studying when vision-language models benefit from explicit reasoning, and when extra thinking improves or interferes with visual understanding.

Embodied Agentic Runtime

Jing Bi, Dingxing Zhang, Tong Liu, Gai Liu, Zhang Liu, Bruce Wen

A visual-first runtime for embodied agents that learn from pixel streams, align with human intent, and support grounded reasoning, planning, and intervention in real-world settings.

When to Think and When to Look: Uncertainty-Guided Lookback

Jing Bi, Filippos Bellos, Junjia Guo, Yayuan Li, Chao Huang, Yolo Y. Tang, Luchuan Song, Susan Liang, Zhongfei Mark Zhang, Jason J. Corso, Chenliang Xu

A study of test-time thinking in large vision-language models that shows more reasoning is not always better, then introduces uncertainty-guided lookback to improve visual grounding and decoding performance.

What to Do Next? Memorizing skills from Egocentric Instructional Video

Jing Bi, Yunlong Tang, Chao Huang, Chenliang Xu

A memory-based approach for interactive action planning from egocentric demonstrations that models affordances to select contextually appropriate actions and detect deviations during task execution.

Perceptual Copilot

An experimental prototype that integrates OpenAI agents with visual tools to process real-time video streams.

I2G: Generating Instructional Illustrations via Text-Conditioned Diffusion

Jing Bi, Pinxin Liu, Ali Vosoughi, Jiarui Wu, Jinxi He, Chenliang Xu

A language-driven framework for turning procedural text into coherent visual instructions using structured text encoding, discourse coherence modeling, and diffusion-based generation.

Why Reasoning Matters? A Survey of Advancements in Multimodal Reasoning

Jing Bi, Susan Liang, Xiaofei Zhou, Pinxin Liu, Junjia Guo, Yunlong Tang, Luchuan Song, Chao Huang, Ali Vosoughi, Guangyu Sun, Jinxi He, Jiarui Wu, Shu Yang, Daoan Zhang, Chen Chen, Lianggong Bruce Wen, Zhang Liu, Jiebo Luo, Chenliang Xu

A comprehensive survey examining reasoning techniques in both textual and multimodal LLMs, addressing the challenge of integrating visual and textual inputs while resolving ambiguities across modalities.

VERIFY: A Benchmark of Visual Explanation and Reasoning for Investigating Multimodal Reasoning FidelitY

Jing Bi*, Junjia Guo*, Susan Liang*, Guangyu Sun, Luchuan Song*, Yunlong Tang*, Jinxi He*, Jiarui Wu*, Ali Vosoughi*, Chen Chen, Chenliang Xu*

The first benchmark explicitly designed to assess the reasoning path of MLLMs in visual reasoning tasks with novel metrics that assess reasoning fidelity beyond accuracy.

Unveiling Visual Perception in Language Models: An Attention Head Analysis Approach

Jing Bi, Junjia Guo, Yunlong Tang, Lianggong Bruce Wen, Zhang Liu, Chenliang Xu

Novel attention mechanisms for improving visual perception in deep learning models, with a focus on spatial and temporal dynamics studied through attention head analysis.

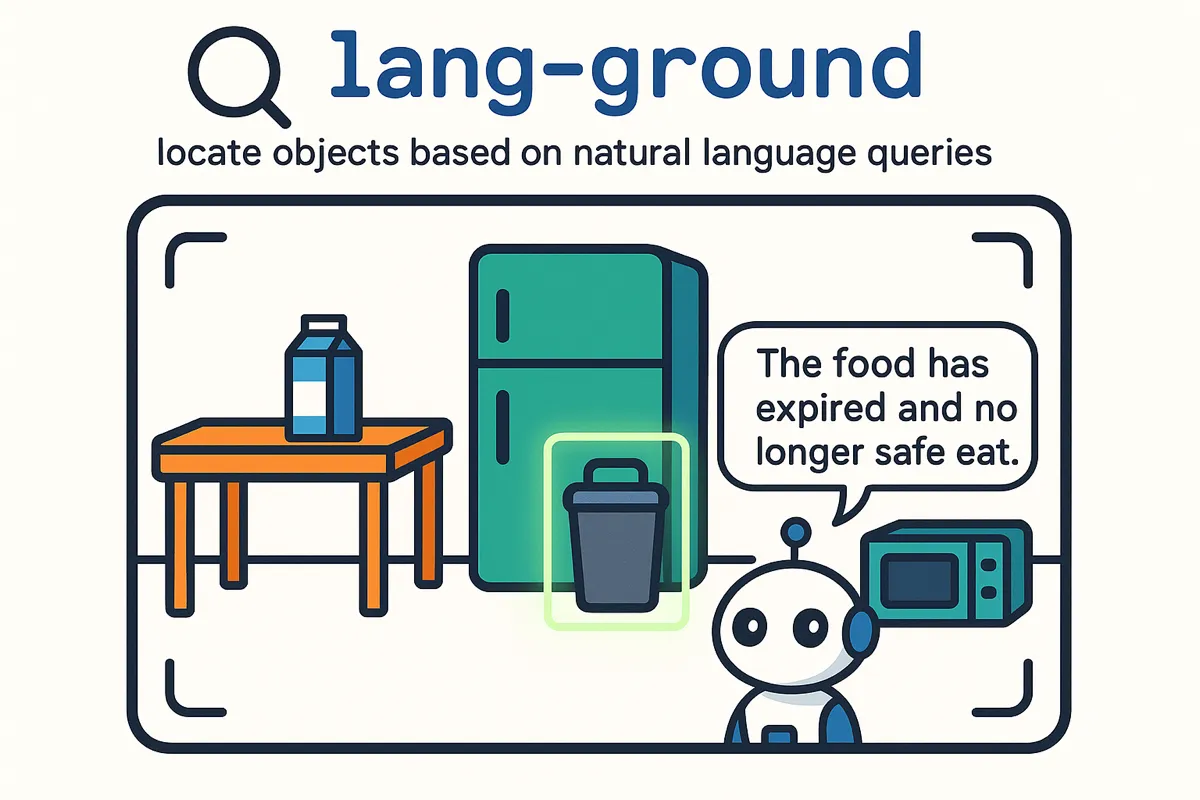

Language Grounding

Use natural language to localize and track objects in real-time with advanced computer vision.

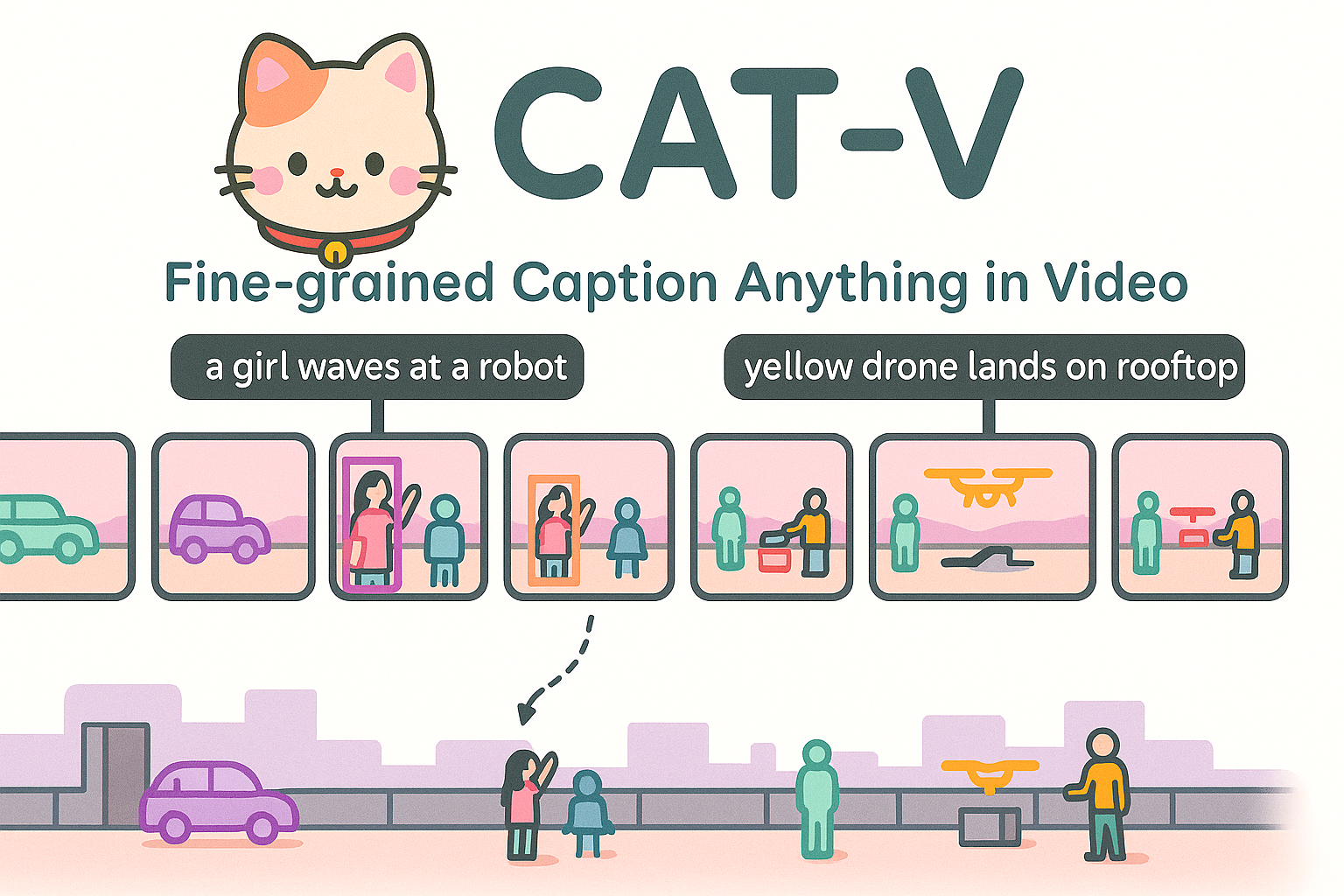

CAT-V

A comprehensive computer vision toolkit for advanced image and video analysis with state-of-the-art algorithms and processing capabilities.

AVicuna: Audio-Visual Conversation Understanding

Yunlong Tang, Daiki Shimada, Jing Bi, Mingqian Feng, Hang Hua, Chenliang Xu

A multimodal large language model capable of aligning audio-visual events with temporal intervals and text tokens. Built on the PU-VALOR dataset of over 114,000 pseudo-untrimmed videos, AVicuna excels at temporal localization and time-aware dialogue for audio-visual understanding.

OSCaR: Object State Captioning and State Change Representation

Nguyen Nguyen, Jing Bi, Ali Vosoughi, Yapeng Tian, Pooyan Fazli, Chenliang Xu

A comprehensive dataset and benchmark for evaluating multimodal large language models on object state captioning and state change representation. OSCaR consists of 14,084 annotated video segments covering nearly 1,000 unique objects from various egocentric video collections, providing a new testbed for understanding dynamic environments and object state changes.

EAGLE: Egocentric AGgregated Language-video Engine

Jing Bi, Yunlong Tang, Luchuan Song, Ali Vosoughi, Nguyen Nguyen, Chenliang Xu

A video-based multimodal large language model fine-tuned for egocentric video content using the EAGLE-400K dataset, which comprises 400K visual instruction-tuning samples from diverse sources.

Video Understanding with Large Language Models: A Survey

Yunlong Tang, Jing Bi, Siting Xu, Luchuan Song, Susan Liang, Teng Wang, Daoan Zhang, Jie An, Jingyang Lin, Rongyi Zhu, Ali Vosoughi, Chao Huang, Zeliang Zhang, Feng Zheng, Jianguo Zhang, Ping Luo, Jiebo Luo, Chenliang Xu

A comprehensive survey examining the emergent capabilities of Vid-LLMs in video understanding, covering open-ended multi-granularity reasoning and categorizing approaches into three main types: Video Analyzer x LLM, Video Embedder x LLM, and (Analyzer + Embedder) x LLM.

Streamem

A streaming application platform for real-time content delivery and management with modern web technologies.

MISAR: A Multimodal Instructional System with Augmented Reality

Jing Bi*, Nguyen Manh Nguyen*, Ali Vosoughi*, Chenliang Xu

An innovative augmented reality system that harnesses LLMs to assimilate information from visual, auditory, and contextual modalities, focusing on task performance quantification in AR through egocentric video, speech, and context analysis.

AR AI Assistant

An advanced AI research and implementation platform showcasing cutting-edge artificial intelligence techniques and applications.

Automatic Differentiation

A repository for exploring and implementing automatic differentiation algorithms, enabling efficient computation of derivatives for scientific computing.

Procedure Planning in Instructional Videos via Contextual Modeling and Model-based Policy Learning

Jing Bi, Jiebo Luo, Chenliang Xu

A novel approach that combines Bayesian inference and model-based imitation learning to learn goal-directed action planning from instructional videos, capturing both long-term action associations and short-term action separations.

Learning from Interventions Using Hierarchical Policies for Safe Learning

Jing Bi, Vikas Dhiman, Tianyou Xiao, Chenliang Xu

A hierarchical policy framework that addresses expert reaction delay in Learning from Interventions (LfI) through novel backtracking interpolation and sub-goal prediction for safe autonomous learning.

Get in Touch

Always interested in collaborations and new ideas. Feel free to reach out!